Scale Without Breaking the System

Scaling is not about doing more. It is about making a system that other people can run without the quality falling apart underneath you.

Why most scaling attempts fail — and why they fail fast

The typical failure pattern goes like this: a clipper is getting consistent results solo. They decide to bring in an editor to increase volume. The editor produces clips that are technically fine but miss the quality standard in ways that are hard to articulate. The pages start underperforming. The clipper spends more time reviewing and correcting than they did when they were doing everything themselves. They either fire the editor and go back to solo or keep going with a system that costs more and produces less.

This happens because the clipper had a standard that lived entirely in their head: something they could execute instinctively but could not explain. Scaling requires converting that instinct into explicit, documented standards that someone else can follow. That is the real work of going from solo to operator.

The readiness test — are you actually ready to scale?

Before adding any team members or expanding page count, run through this honestly. If you cannot answer yes to most of these, scaling will multiply problems rather than output.

A useful threshold: if a new editor following your written standards can produce, on their first attempt, a clip that you would post without changes, your SOPs are ready. If every clip requires significant correction, the SOP is still incomplete and hiring is premature.

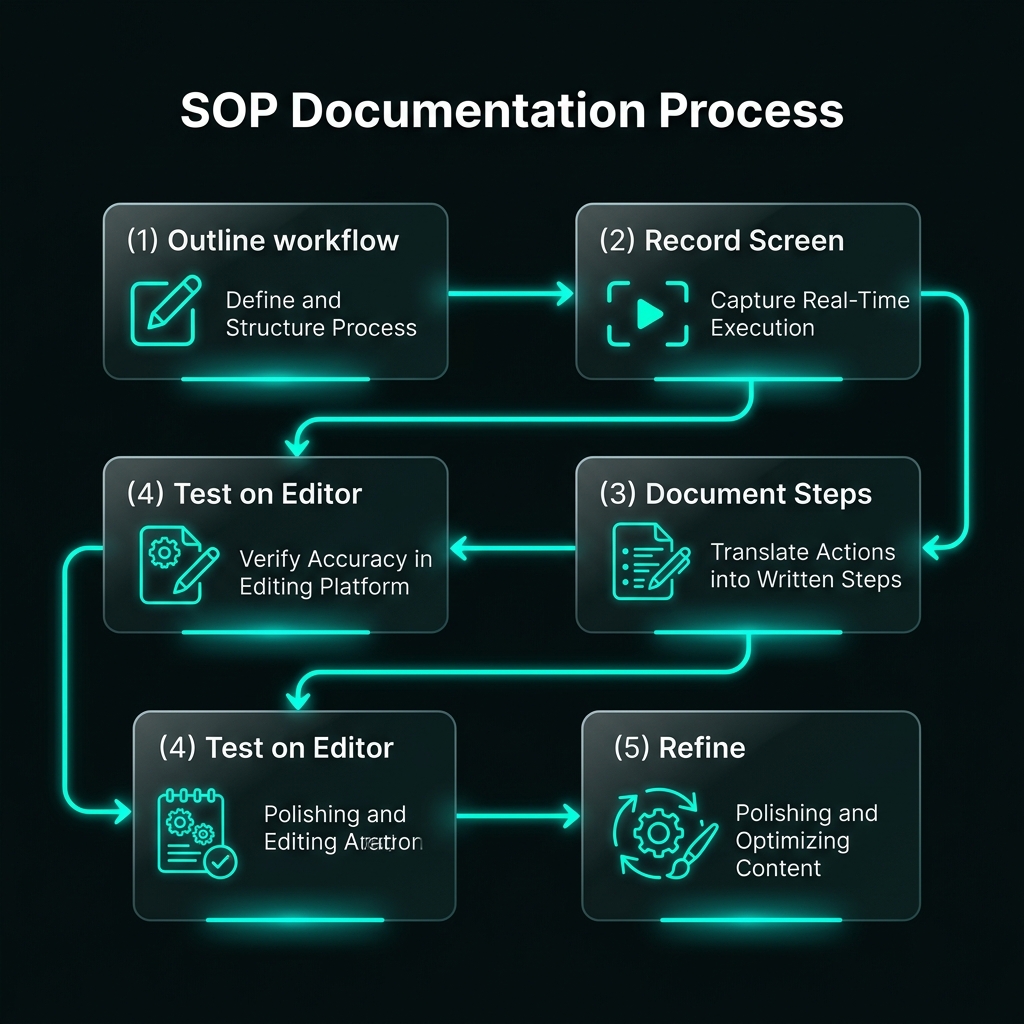

Building SOPs — what they need to include to actually work

An SOP (Standard Operating Procedure) is a documented process for a repeatable task. In clipping, you need SOPs for four core processes: moment selection, editing, posting, and QA. Most clippers who try to write SOPs produce something too vague to be useful — a list of principles rather than executable instructions.

A useful SOP answers specific questions, not general ones. Here is the difference:

Too vague (unusable)

- "Pick the best moments from the stream"

- "Make the editing clean and tight"

- "Write a caption that fits the niche"

- "Check quality before posting"

Specific (usable)

- "Select moments where viewer reaction is visible and lasts 15–45s"

- "Remove pauses over 0.5 seconds; subtitle every word in Arial Bold 70pt white"

- "Caption: hook claim + one context line + 3 hashtags from approved list"

- "Check: completion rate >50% on preview watch, no policy flags visible"
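One way to test whether an SOP rule is specific enough is to ask whether it could be written as a check. The sketch below encodes three of the "specific" examples above (moment length, pause trimming, hashtag count from an approved list). The class name, field names, and the hashtag list are hypothetical placeholders, not part of any real tool; replace the values with your own standards.

```python
from dataclasses import dataclass

# Values taken from the "specific" SOP examples above; adjust to your own SOP.
MIN_MOMENT_SECONDS = 15
MAX_MOMENT_SECONDS = 45
MAX_PAUSE_SECONDS = 0.5
REQUIRED_HASHTAGS = 3
# Placeholder list: an assumption for illustration only.
APPROVED_HASHTAGS = {"#gaming", "#clips", "#streamer", "#fyp"}


@dataclass
class ClipDraft:
    duration_seconds: float
    longest_pause_seconds: float
    hashtags: list[str]


def sop_violations(clip: ClipDraft) -> list[str]:
    """Return the SOP rules this draft breaks; an empty list means it passes."""
    problems = []
    if not MIN_MOMENT_SECONDS <= clip.duration_seconds <= MAX_MOMENT_SECONDS:
        problems.append("duration outside 15-45s")
    if clip.longest_pause_seconds > MAX_PAUSE_SECONDS:
        problems.append("pause longer than 0.5s")
    if len(clip.hashtags) != REQUIRED_HASHTAGS:
        problems.append("caption needs exactly 3 hashtags")
    unapproved = [tag for tag in clip.hashtags if tag not in APPROVED_HASHTAGS]
    if unapproved:
        problems.append("unapproved hashtags: " + ", ".join(unapproved))
    return problems
```

A vague rule such as "make the editing clean and tight" cannot be expressed this way, which is a quick signal that it needs rewriting.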

The four SOPs every clipping operation needs

Moment selection

Documents how to identify which parts of source material are worth clipping. Should specify: minimum moment length, what emotional or informational quality makes a moment strong, which source creators to prioritize, and how many moments to identify per hour of source content.

Editing standards

Documents the exact editing process for this page: clip length targets, subtitle font and positioning, silence trim rules, reframing requirements for vertical content, sound design norms, and any visual elements that must appear (logo, color grade, watermark).

Posting protocol

Documents how clips get posted: caption formula, hashtag list, cover image creation process, posting time targets, how to handle the campaign link or tracking pixel if required, and what metadata fields to fill in.

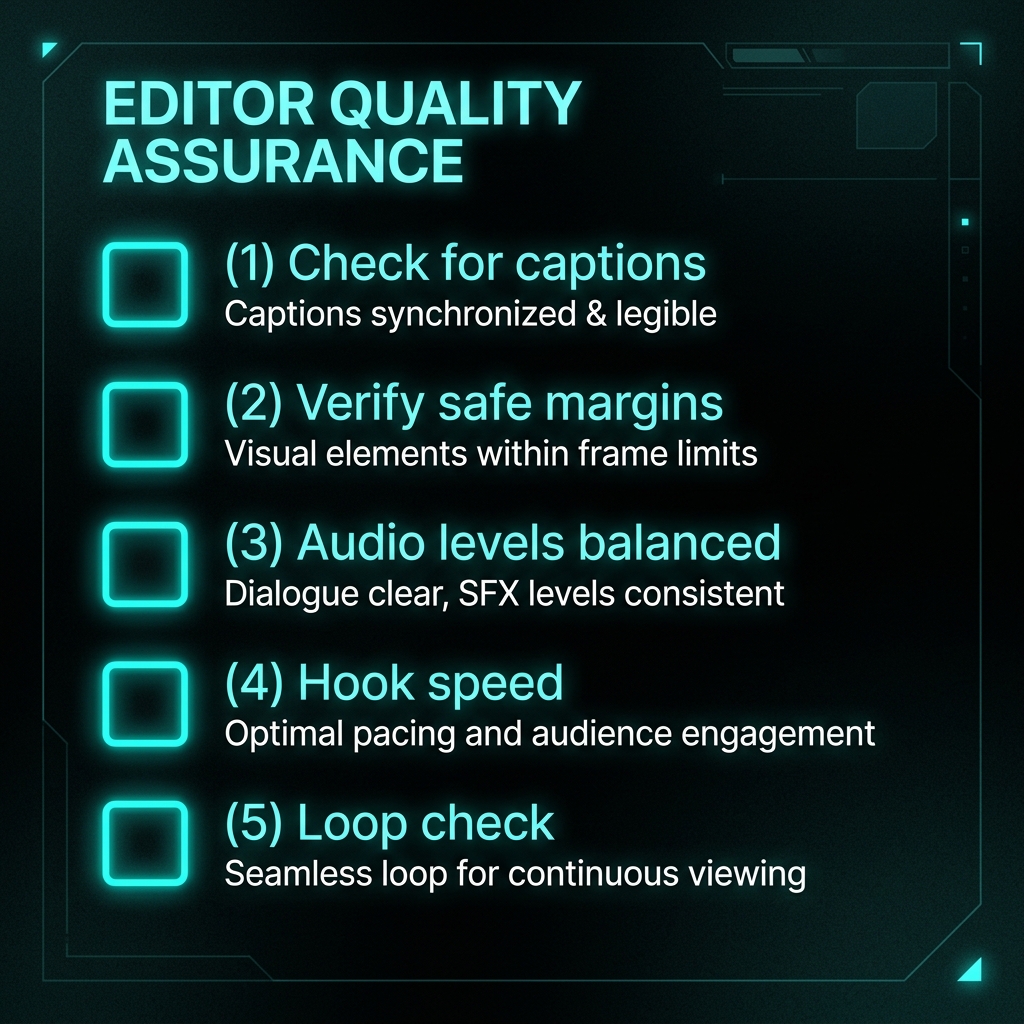

QA checklist

Documents the final checks before a clip is approved to post. Should be a concrete yes/no checklist, not a vague "review for quality." Typically includes: niche fit, editing standard compliance, caption check, campaign compliance check, cover image set.
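A yes/no checklist can be sketched as a simple all-or-nothing gate. The items below are the five listed above; the function name and the rule that an unanswered item counts as a "no" are assumptions for illustration.

```python
# The five checks named in the QA checklist description above.
QA_ITEMS = [
    "niche fit",
    "editing standard compliance",
    "caption check",
    "campaign compliance check",
    "cover image set",
]


def review(answers: dict[str, bool]) -> tuple[bool, list[str]]:
    """Approve only if every item is answered yes.

    Returns (approved, failed_items). A missing answer is treated as "no",
    so a reviewer cannot approve by skipping a check.
    """
    failed = [item for item in QA_ITEMS if not answers.get(item, False)]
    return (len(failed) == 0, failed)
```

Returning the failed items gives the reviewer ready-made notes to send back to the editor.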

Team roles — what each person actually does

Most clipping teams are small — 2–5 people covering the key functions. Understanding what each role requires prevents the common mistake of hiring one person and expecting them to do everything.

Editor

Executes moment selection and editing according to SOP. The first hire in most operations — takes the most time-intensive task off the operator's plate.

Reviewer

Checks clips against the QA checklist before they go live. Does not edit — reviews and approves or returns with notes. Often the operator at early scale.

Publisher

Handles the posting process — caption writing, cover images, hashtags, scheduling, and tracking. Can be combined with editor at lower volume.

Operator

Owns everything: campaign selection, SOP maintenance, performance review, payout reconciliation, and team direction. This is you. Do not delegate this role prematurely.

Quality control at scale — why a 20% rejection rate is healthy

Many operators run QA in a way that is effectively a rubber stamp — every clip submitted gets approved because rejecting feels like wasted effort. This is the most common way that team scale degrades page quality over time.

A healthy rejection rate — where approximately 20–30% of submitted clips are returned for rework or discarded — signals that QA is actually working. It also creates a feedback loop that trains editors: they learn what the standard is from what gets rejected, which raises their baseline over time.
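The rejection rate is easy to track per week or per editor. A minimal sketch, assuming you log how many clips were submitted and how many were returned or discarded; the 20–30% band comes from the text above, while the reading of an above-range rate (unclear SOPs) is an assumption rather than a rule from this guide.

```python
def rejection_rate(submitted: int, rejected: int) -> float:
    """Share of submitted clips that were returned for rework or discarded."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    if not 0 <= rejected <= submitted:
        raise ValueError("rejected must be between 0 and submitted")
    return rejected / submitted


def qa_signal(rate: float, low: float = 0.20, high: float = 0.30) -> str:
    """Classify a rejection rate against the healthy band."""
    if rate < low:
        return "below range: QA may be acting as a rubber stamp"
    if rate > high:
        # Assumption: persistent over-rejection suggests the SOP is unclear.
        return "above range: check whether the SOPs are specific enough"
    return "within range"
```

For example, 10 rejections out of 40 submissions gives a rate of 0.25, which falls inside the band.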

The QA checklist — adapt this to your niche and standards

Payout tracking — the record that protects you

At small scale, mental accounting works. At team scale, it fails. Missing a clip submission, losing track of which account posted what, or not reconciling payout discrepancies in time can cost real money — and it erodes operator discipline in ways that compound.

The minimum viable tracking sheet

| Clip title | Campaign | Account | Posted | Raw views | Qualified views | Expected pay | Actual pay | Status |
|---|---|---|---|---|---|---|---|---|
| Streamer reaction - fight | Campaign A | @GamingMoments | Apr 3 | 85,400 | 51,240 | $41.00 | $38.50 | Paid |
| Podcast clip - career take | Campaign B | @PodcastDaily | Apr 4 | 124,000 | 68,200 | $54.56 | Pending | Pending |
| Hip-hop freestyle drop | Campaign A | @HiphopClips | Apr 5 | 34,200 | 18,910 | $15.13 | — | Submitted |

Maintain this for every clip posted to every campaign. When payout discrepancies arise — and they will — your tracking sheet is the evidence. Without it, disputed payouts are very difficult to contest.
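Reconciliation can be sketched as a pass over the sheet that compares expected and actual pay for every clip that has been paid. The record type, field names, and the one-cent tolerance are assumptions for illustration; the sample rows are the three from the tracking sheet.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ClipRecord:
    title: str
    campaign: str
    account: str
    expected_pay: float
    actual_pay: Optional[float]  # None until the payout has arrived


def payout_discrepancies(records, tolerance=0.01):
    """Return (title, shortfall) for paid clips where actual differs from expected.

    A positive shortfall means you were paid less than expected.
    Unpaid clips (actual_pay is None) are skipped.
    """
    flagged = []
    for record in records:
        if record.actual_pay is None:
            continue
        shortfall = round(record.expected_pay - record.actual_pay, 2)
        if abs(shortfall) > tolerance:
            flagged.append((record.title, shortfall))
    return flagged


sheet = [
    ClipRecord("Streamer reaction - fight", "Campaign A", "@GamingMoments", 41.00, 38.50),
    ClipRecord("Podcast clip - career take", "Campaign B", "@PodcastDaily", 54.56, None),
    ClipRecord("Hip-hop freestyle drop", "Campaign A", "@HiphopClips", 15.13, None),
]
```

Run against these rows, only the first clip is flagged, with a shortfall of $2.50 to raise with the campaign.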

The economics of scaling — what you are actually building toward

Scaling is only worth doing if the economics work at team level. A solo clipper earning $1,500 per month who hires an editor for $800 per month is only ahead if the editor's output increases total earnings by more than $800. That requires: higher clip volume, maintained page quality, and access to campaigns that support the increased output.

Simple scale economics check

The risk is that adding an editor reduces clip quality, which lowers the per-clip average. If the per-clip average drops from $25 to $18 because of quality dilution, the economics can reverse: unless volume rises enough to offset the lower average, the editor costs more than they add. This is exactly why SOPs and QA exist: to protect per-clip performance as volume increases.
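The arithmetic can be sketched with the figures used in this section: $1,500 solo income, an $800 editor, and a per-clip average of $25 or $18. The function names are hypothetical, and the sketch assumes earnings scale linearly with clip count, which real campaigns may not do.

```python
import math


def clips_to_break_even(solo_income: float, editor_cost: float,
                        per_clip_average: float) -> int:
    """Monthly clips needed for team earnings to cover solo income plus the editor."""
    target = solo_income + editor_cost
    return math.ceil(target / per_clip_average)


def net_gain(clips: int, per_clip_average: float,
             solo_income: float, editor_cost: float) -> float:
    """Change in the operator's monthly take-home compared with staying solo."""
    return clips * per_clip_average - editor_cost - solo_income


# Figures from the text above.
at_25 = clips_to_break_even(1500, 800, 25)  # 92 clips
at_18 = clips_to_break_even(1500, 800, 18)  # 128 clips
```

At $25 per clip the team needs 92 clips a month just to match the solo result; at $18 it needs 128. A team posting 100 clips comes out $200 ahead at $25 per clip and $500 behind at $18.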

When not to hire — and what to fix instead

You have the model. Now use the tools.

The academy covers the foundation. Compare it against what is actually happening in the market — live campaigns, platform conditions, and clipper discussions — in the community and the platform directory.
